In a previous post we talked about Hybrid Interfaces, where a membrane switch and a touch sensor share one device to give you the best of both worlds. As technologies evolve, there are more and more ways to get an HMI exactly how you want it. Did you know that it is now possible to have multiple types of touch sensors on one HMI?

Our friends over at SigmaSense® asked if we would manufacture a touch screen with 4 different types of touch areas to test out a new capability that their touch controller offers. It worked! The best part is, all 4 areas can be touched at once.

The SigmaDrive® technology eliminates the need for equal-length sensor traces and uniform impedance, so multiple sensors can now share a channel.

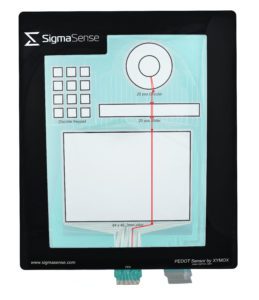

This new test sensor features a full matrix, a discrete keypad, a slider, and a pinwheel slider: 4 sensor zones on the same sensor. A single RX trace can run continuously through 3 different zones (see the image below; traces run through the matrix, slider, and wheel areas).

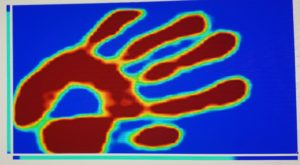

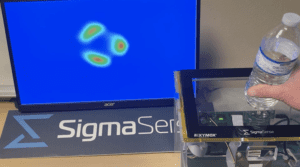
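To make the shared-trace idea concrete, here is a minimal sketch of how firmware or host software might decide which zone a given RX/TX junction belongs to. The channel counts and zone boundaries are hypothetical, made up for illustration; they are not the actual layout of this test sensor, and `Zone`, `zone_of`, and `zones_on_rx` are illustrative names, not part of any SigmaSense API.

```python
from typing import NamedTuple, Optional


class Zone(NamedTuple):
    name: str
    rx: range  # RX channels that pass through this zone
    tx: range  # TX channels that cross those RX channels inside this zone


# Hypothetical layout: RX channels 0-3 run continuously through the matrix,
# slider, and wheel, while the keypad sits on its own RX channels.
# Every number here is an assumption for the sake of the example.
ZONES = [
    Zone("matrix", range(0, 4), range(0, 8)),
    Zone("slider", range(0, 4), range(8, 10)),
    Zone("wheel", range(0, 4), range(10, 14)),
    Zone("keypad", range(4, 6), range(0, 6)),
]


def zone_of(rx: int, tx: int) -> Optional[str]:
    """Return the zone that owns the junction (rx, tx), or None if unused."""
    for zone in ZONES:
        if rx in zone.rx and tx in zone.tx:
            return zone.name
    return None


def zones_on_rx(rx: int) -> list:
    """List every zone that a single RX trace runs through."""
    return [zone.name for zone in ZONES if rx in zone.rx]
```

In this made-up layout, `zones_on_rx(0)` reports that one RX trace serves the matrix, slider, and wheel, which mirrors the shared trace described above.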

Capacitive Imaging is the heart of the SigmaSense controller: it creates a “capacitive image” of the sensor by measuring the capacitance at every RX/TX junction simultaneously, 300 times per second. Combined with SigmaSense high-fidelity data, Capacitive Imaging enables machine learning and AI-based functions such as recognizing an object, detecting its orientation, and even identifying who is touching it. These new human machine interfaces (HMIs) unleash vast new capabilities in the industry and allow for some new uses of the traditional PCAP sensor.

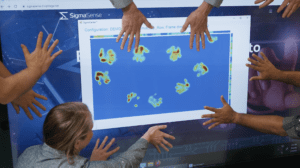
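One way to picture a capacitive image is as a small grid of numbers, one per RX/TX junction, refreshed every frame. The sketch below is not SigmaSense's algorithm. It is a simple illustration that assumes each frame arrives as a list of rows holding the change in capacitance from an untouched baseline, in arbitrary units, and it treats any junction that is above a threshold and stronger than all of its neighbours as a touch. The names `find_touches` and `example_frame` are hypothetical.

```python
def find_touches(frame, threshold):
    """Return (row, col) of every junction that is a local peak above threshold.

    `frame` is one capacitive image: a list of rows, each a list of
    capacitance deltas. Ties between equal neighbours are not resolved
    in this simple sketch, so a flat plateau reports no peak.
    """
    rows = len(frame)
    cols = len(frame[0]) if rows else 0
    touches = []
    for r in range(rows):
        for c in range(cols):
            value = frame[r][c]
            if value < threshold:
                continue
            # Collect the up-to-8 surrounding junctions, clipped at the edges.
            neighbours = [
                frame[nr][nc]
                for nr in range(max(0, r - 1), min(rows, r + 2))
                for nc in range(max(0, c - 1), min(cols, c + 2))
                if (nr, nc) != (r, c)
            ]
            if all(value > n for n in neighbours):
                touches.append((r, c))
    return touches


# A made-up 4 x 5 frame with three fingers down at once.
example_frame = [
    [0, 1, 0, 0, 0],
    [1, 9, 2, 0, 0],
    [0, 2, 1, 3, 8],
    [7, 1, 0, 2, 3],
]

print(find_touches(example_frame, threshold=5))  # [(1, 1), (2, 4), (3, 0)]
```

Because every junction is examined in every frame, nothing in this approach caps the touch count at 10 or 20; the only ceiling is the number of junctions in the grid.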

Infinite touch points – Well, kind of; the number of touch points is limited only by the number of nodes in the sensor. Gone are the days of only 10 or 20 touch points.

Object Identification – The controller can sense objects placed on the sensor.

The SigmaSense controller and the newfound ability to run traces through multiple touch areas open up a whole new world of possibilities! You can see this sensor in action on the SigmaSense Feature Montage starting at the 2:12 mark.

What are you working on where multiple touch points or different sensor areas would be useful?